middle, and high income). When explored, the model was established to be inequitable, particularly for low-income households.

The model was highly likely to miscalculate means and the corresponding contributions, particularly for low-income households.

The public image of SHIF may have suffered during recent political unrest.

We recommended focusing on risk management in the short term, rather than on improving model accuracy.

Africa Uncensored

SHA's flawed AI system overcharges those with least

May 4, 2026

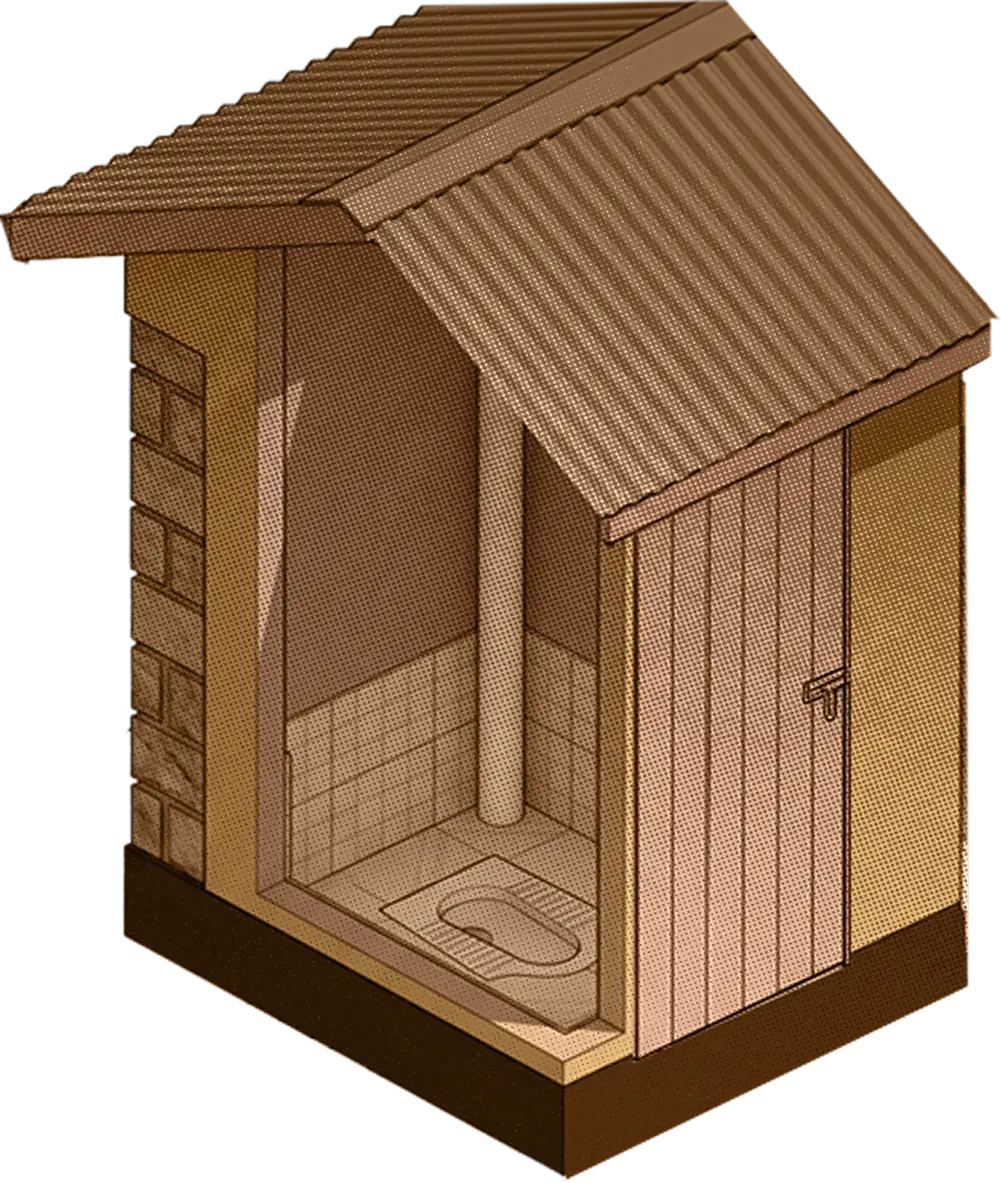

Every day, Grace* sits in people's homes and asks them questions that range from the odd to the intrusive. What type of toilet do you use? What is your roof made of? Do you own a radio?

She helps the occupants enter the answers to these questions — pit latrine, iron sheet roof, no radio — into a digital questionnaire on their phones. Since there are 30 questions, it can be a lengthy process. People are often confused, uncomfortable even. Some feel like they are under investigation. But finally, when the form is complete, a number comes back. That number, calculated by an artificial intelligence model, is the sum the household must pay that year for public health insurance.

Grace, mother of ten, has spent more than 30 years volunteering, first with her church and more recently as a community health promoter — one of roughly 100,000 government volunteers embedded across Kenya to deliver basic health services.

She helps dozens of people each week register with the Social Health Authority (SHA), the centerpiece of President William Ruto's sweeping overhaul of Kenya's public health insurance system. She works in Embakasi, a crowded mix of informal settlements on the eastern edge of Nairobi, where she visits hawkers, day labourers and the unemployed.

"We grew up trying to make ends meet," she said. "I started struggling at a young age. So when I see someone suffering it touches me."

Many of the people she sees are living in a way she recalls from her youth. Some have no savings, others live on the street. These are exactly the people the government promised would benefit the most from the AI-driven health reforms: those with the lowest incomes, who were supposed to be charged the minimum premium, or have their premiums covered entirely. "They thought it was something that would help them," Grace said.

The reality Grace sees on the ground is entirely different.

The people she registers are some of the poorest in Nairobi, yet the majority are charged premiums they cannot afford. She has watched families struggling to feed themselves charged a sum they could never hope to afford. She has also seen critically ill people who cannot get treatment because they have not been able to pay the amount the AI system says they should.

"People are dying, people are suffering," Grace said.

Grace has found herself on the frontlines of a radical experiment: using AI to determine how much Kenyans should pay for health care.

Since its launch, SHA has been met with a barrage of criticism for setting unaffordable or incomprehensible premiums. Africa Uncensored, in collaboration with the investigative newsroom Lighthouse Reports and The Guardian, has spent months trying to find out why. Reporters used a combination of access-to-information requests and government data to reconstruct how SHA designed the means testing system, spoke to inside sources about its implementation, and obtained a previously unreported document describing where it went wrong.

The government says that SHA's means testing tool is a fair and objective way to calculate how much people need to pay for access to healthcare. But our findings reveal in unprecedented detail how, from the start, it was designed to systematically overcharge the poorest Kenyans, while undercharging the wealthiest. Consultants brought in to work on the system described it as inequitable in ways which fundamentally could not be corrected. At best, they proposed waves of tinkering which they said could lead to marginal improvements.

But the government pressed ahead anyway.

Across the world, algorithms now determine who should receive a loan for a house, be hired for a job, or be sentenced for a crime. Increasingly, AI is promoted as the answer to previously intractable social problems. Often it is used to make decisions about particular groups of people. In Kenya, for instance, AI systems are used to determine eligibility for student loans and food subsidies. But SHA is different. It is the first system intended to assess virtually every household in the country. And, given how it governs access to healthcare, its decisions can have life or death consequences.

An opaque formula

In October 2023, President Ruto spoke to a crowd at a football stadium in the highlands town of Kericho about a key election pledge. Every citizen, he announced, would soon have access to affordable healthcare. "No Kenyan will be left behind."

Parliament had just passed a law establishing SHA. It replaced the National Health Insurance Fund, an aging bureaucracy that had existed in various forms since 1966 and had fallen deeply into debt. The old system placed almost all of the national health burden on salaried employees, who paid for it via deductions, while a small number of informal workers bought their way in for a flat fee of 500 shillings a month.

Under the new system, SHA registration would be mandatory and annual health insurance contributions would depend on income, with those who earned more paying more and those earning less paying less.

The problem is that the Kenyan government does not know what most of its people earn. The formal sector, representing salaried workers with payslips, accounts for just 17 percent of the workforce. The other 83 percent work in the informal economy, with no reliable income records.

Some months after Ruto's speech, Dr. Brian Lishenga found himself in a conference room in Naivasha, listening to government officials and international donors discussing how to solve that problem. As Chairman of the Rural and Urban Private Hospitals Association of Kenya, Lishenga had a front row seat at SHA's creation. He wanted to understand how the government planned to get the country's tens of millions of informal workers to pay into the system.

"How are you going to determine contributions for this big pocket?" he wondered. "That's the first time I hear 'proxy means testing' — and I got curious."

Although he didn't know it at the time, what Lishenga was hearing was a longstanding — and controversial — idea that economists have been toying with since the 1990s. It offers a way of solving hard questions about how much people earn. Instead of asking for an income figure, the theory goes, you collect other data — what their house is made of, what they own, where they live and work — and use this to predict their income.

The approach has spread. Across Africa, Asia and Latin America, proxy means testing algorithms have become a popular way to determine which households are 'poor enough' to receive cash transfers, food subsidies and other social benefits, often with the backing of the World Bank or other international donors.

In March 2024, the government published a formula in the Kenya Gazette, enshrining the new tool in law. But behind the forest of statistical symbols and mathematical notation, the true meaning was unclear.

"It could as well have been written in ancient Egyptian," Lishenga said. "So, I don't think anyone paid attention to this formula, because it was meant to be consumed by mathematicians and statisticians and, you know, technical people. But in a real sense, that formula had a huge impact on real people's lives."

Inside the system

The published formula provided little insight into how the system was actually meant to work, referring only broadly to "household-specific and individual-specific indicators." But what these are, and how they would be used, was not publicly disclosed.

Africa Uncensored and Lighthouse Reports submitted requests to SHA under the Access to Information Act, a law that gives Kenyan citizens the right to see information held by government agencies. When SHA failed to respond, we complained to the Ombudsman. Forced to comply, SHA sent us a 10-page document listing the indicators, and explaining how they could be converted into numbers to feed into a machine learning system. This system would then predict people's incomes. It did this by comparing the inputs it received to an older dataset, a survey of Kenyan households carried out in 2021. The indicators — floor material, marital status, owning a radio, and 40 other things intended to capture how people live — are what Grace, the community health worker, fills into the form each day for her clients.

We obtained a copy of the 2021 survey from the Kenyan Bureau of Statistics. Armed with this and the list of indicators, we were able to rebuild, and test, the system which SHA had disclosed to us. The formula assigns each indicator a value and then adds them together to estimate a family's income, like a receipt. We ran a series of tests to see how its predictions compared to the actual data it was trained on.
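The "receipt" analogy can be made concrete with a minimal sketch. The indicator names, weights and base amount below are hypothetical, chosen purely for illustration; they are not the values SHA disclosed or the ones published in the Gazette.

```python
# Hypothetical indicator weights (shillings per month); NOT SHA's actual values.
HYPOTHETICAL_WEIGHTS = {
    "roof_iron_sheet": 1500,
    "toilet_pit_latrine": 500,
    "owns_radio": 800,
    "owns_smartphone": 2000,
}
HYPOTHETICAL_BASE = 3000  # assumed starting amount before any indicator is added


def estimate_income(answers: dict[str, bool]) -> int:
    """Add up the weight of every indicator the household answered 'yes' to."""
    total = HYPOTHETICAL_BASE
    for indicator, present in answers.items():
        if present:
            total += HYPOTHETICAL_WEIGHTS[indicator]
    return total


# A household like the one in the opening scene: pit latrine, iron sheet roof, no radio.
household = {
    "roof_iron_sheet": True,
    "toilet_pit_latrine": True,
    "owns_radio": False,
    "owns_smartphone": False,
}
print(estimate_income(household))  # 3000 + 1500 + 500 = 5000
```

Each answer adds a fixed line item to the total, so two households with the same answers always receive the same estimate, whatever they actually earn.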

What we found was stark. The formula contained an inbuilt flaw: it would overpredict poor people's incomes and underpredict rich people's incomes. It misclassified many actually poor people as living above the poverty line, meaning their SHA contributions ended up higher than they should be.

David Khaoya was one of the early developers of the means testing system. In 2023, before the formula was published in the Gazette, he was working as a health economist for Palladium, a consultancy funded by USAID that advised the Ministry of Health during the initial design of the tool. He described how, faced with the formula's inevitable flaw, its designers needed to make a choice. They could try to correctly classify poor households, but this meant underestimating the income of rich ones. Or instead they could focus on correctly classifying rich households, which meant increasing the number of poor households whose income is overestimated.

Faced with that choice, Khaoya said, the government decided to prioritise the wealthy being correctly assessed, even if that meant overcharging the poor.

"If you identify a richer person as poor and therefore ask him to pay less, this person will never own up and say look, I'm actually supposed to be paying more," Khaoya said.

Instead of this, he suggested, it made sense to minimise the number of rich people being identified as poor, knowing that this meant more poor people would be identified as rich. But, he added, these wrongly-classified poor people would be able to appeal and get the mistake corrected.
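The trade-off Khaoya describes can be illustrated with a small sketch. The eight households, their predicted incomes and the poverty line below are invented for illustration and do not come from the survey or from SHA's model; the point is only that, when predictions are imperfect, moving the cut-off shifts errors from one group to the other.

```python
# Hypothetical households: (actual monthly income, predicted monthly income), in shillings.
HOUSEHOLDS = [
    (3000, 4500), (4000, 3500), (5000, 7000), (6000, 5500),
    (9000, 6500), (12000, 8000), (20000, 15000), (40000, 30000),
]
POVERTY_LINE = 7000  # assumed value, for illustration only


def count_errors(cutoff: int) -> tuple[int, int]:
    """Return (poor households classified as non-poor, non-poor households classified as poor)."""
    poor_overcharged = 0
    rich_undercharged = 0
    for actual, predicted in HOUSEHOLDS:
        actually_poor = actual <= POVERTY_LINE
        predicted_poor = predicted <= cutoff
        if actually_poor and not predicted_poor:
            poor_overcharged += 1
        elif not actually_poor and predicted_poor:
            rich_undercharged += 1
    return poor_overcharged, rich_undercharged


for cutoff in (8000, 6500, 5000):
    print(cutoff, count_errors(cutoff))
```

In this toy data, a generous cut-off of 8,000 misclassifies no poor households but lets two better-off households pay too little; a strict cut-off of 5,000 catches every better-off household but overcharges two poor ones. No cut-off removes both kinds of error.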

"Highly likely to miscalculate"

As SHA's October 2024 rollout neared, however, a new report into the system that Khaoya worked on painted a bleak picture of how this formula would play out in real life. We obtained a copy of this report, the contents of which have not been revealed to the public until now.

The report, by international data consultancy IDinsight, followed months of scoping the AI system that SHA had built.

SHA's system, the report found, was "inequitable, particularly for low-income households". The survey used as a basis for determining wealth was flawed because it "over-represents middle-income households and has very few data points from poverty pockets." It was also "out-of-date with the current socio-economic condition," given the "multiple economic shocks" that had affected Kenyans between 2020, when the data was gathered, and 2024. Unless new training data was used, the report found that the system was "highly likely to miscalculate means and the corresponding contributions, particularly for low-income households".

No new training data was available, however. So the consultants recommended "focusing on risk management in the short term." This meant tinkering with the system to try to get it to behave differently, while "creat[ing] a mechanism to allow Kenyans to contest unfair and inequitable predictions from the model."

After months of work, the consultants delivered their final verdict at the end of September, just days before the system's rollout. They had carried out their own data survey and they said they had found a way to mitigate the worst outcomes, if the system was adjusted with various tweaks. But by their own estimate this was limited to "a slight overall increase in model performance, with a 7% improvement in predictions for low-income households."

"We do not guarantee overall model improvement for immediate rollout," they concluded. And in the absence of "nationally representative current data" they also warned that they could not provide "values for accuracy, specificity, or sensitivity": in other words, they were not in a position to say exactly how their new model would perform.

We ran a series of tests on the adjusted AI system proposed in the consultants' report. Our findings were striking. The changes did not improve the system's accuracy. In some cases, they actually made things worse. More than half of poor households were now significantly overcharged. Some, which should have been charged the lowest rate of 300 shillings, were charged 800 or more.

These 100 dots each represent a Kenyan household, arranged by how much they earn.

40 of these households live at or below the poverty line.

Fewer than half are predicted correctly, placed in the low income band.

The majority have their income overpredicted. Many are placed in the middle income band.

Higher income households follow a different pattern.

Many have their incomes underpredicted.

A household pays hundreds more than it should. A wealthy household pays thousands less.

According to their report, IDinsight delivered the code for the new system to the Ministry of Health by the end of September 2024. But implementing it was up to Safaricom and SHA’s employees. Both organisations ignored our requests for information about if, how and when the new system was put into operation.

It remains unclear why, if it had adopted the new system, SHA told us in November last year that it was still using the old one.

“Kenyans have a right to be told exactly what’s going on,” Linda Bonyo, the founder of the Lawyer’s Hub, a digital rights organisation working on Artificial Intelligence policy in Africa, said. “That's a fundamental right within the Constitution of Kenya.”

Sources we spoke to, who were present at discussions around SHA’s rollout, described how the concerns of multiple stakeholders were overridden in the rush to get the agency up and running.

According to one source, both the International Labour Organization and the World Bank cautioned that the income prediction system would struggle to capture the complex and often unpredictable earnings patterns of everyday Kenyans, raising the risk of overcharging or undercharging households. A former UN employee with direct knowledge of its consultations confirmed that the organisation had advised against deploying the system in its current form.

Warnings came from the inside, too. According to one source, the means testing system met resistance from within the Ministry of Health, but pressure was coming from “a very high place” to implement it. Overhauling the financing of a complex healthcare system was a “mammoth project,” this source said, the work of years. But President Ruto wanted it done in months.

“It was bulldozed through,” the person said.

One engineer involved in building the model said that, as figures began to come in for how much money the system would bring in, the government came back with a request. “Is there a way we can make this have a higher yield so that people contribute more?”

"An experiment that has failed"

In a small Catholic church, its walls painted the colour of grass, Grace, the community health promoter, recounts how the system's predictions collide with real lives – sometimes with fatal consequences.

According to Grace, the majority of the people she helps fill out the means testing form are assigned a health premium of 650 shillings a month. For those living below the poverty line, which is the case for many of her clients, this can mean 10 percent or more of their income. For those classified as "food poor" by the Kenyan Bureau of Statistics, meaning they cannot afford to meet basic daily calorie needs, it is nearer to 20 percent.
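The arithmetic behind these shares can be sketched as follows, assuming the percentages refer to monthly household income. The implied income figures are derived from the numbers in the paragraph above; they are not reported figures.

```python
def implied_monthly_income(premium: int, percent_of_income: int) -> float:
    """Monthly income at which the premium equals the given percentage of income."""
    return premium * 100 / percent_of_income


PREMIUM = 650  # shillings per month, the figure Grace reports

# A premium of "10 percent or more" of income implies earnings at or below this level.
print(implied_monthly_income(PREMIUM, 10))  # 6500.0

# A premium of roughly 20 percent of income implies earnings of about this level.
print(implied_monthly_income(PREMIUM, 20))  # 3250.0
```

Read this way, the 650-shilling premium is being charged to households living on about 6,500 shillings a month or less, and on roughly half that among those classified as food poor.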

Kenyans who do not have private insurance and do not pay their SHA premiums risk being turned away from health facilities or confronted with steep hospital bills. For some, this has meant they can no longer access life-saving medical treatment. "People are dying at home, many people have been unable to go to hospital," Grace said.

"Will they pay SHA, or pay for food, or pay for the small house they live in?"

Since October 2024, millions of people have had their premiums calculated by SHA's means testing system. The response has been swift and angry. On Facebook, TikTok and other social media platforms, Kenyans have flooded comment sections with accounts of charges they cannot pay.

"From struggling to pay Ksh 500 previously to being billed Ksh. 1,030," one wrote. Another questioned the system's design: "Owning a smartphone, purchasing an airtime, sleeping in a iron sheet roofed house cannot give the true being of one." A third person, identifying herself as an unemployed single mother, wrote that her monthly contribution had been set at 3,500 Kenyan shillings, 42,000 a year. "God have mercy on me," she wrote. "Somebody help me out."

By some accounts, SHA is teetering on the edge of disaster. Of the more than 20 million people registered, only 5 million are regularly paying their premiums. Some hospitals are reporting large deficits as promised reimbursements from SHA remain unpaid. Other hospitals have been implicated in a fraud scheme said to have cost the agency more than 12.7 billion shillings. In March, former Deputy President Rigathi Gachagua declared that "SHA will collapse in another six months" — an assertion rejected by President Ruto.

Dr. Lishenga, who first heard of proxy means testing at the conference in Naivasha, has emerged as one of the system's most vocal critics.

"This is an experiment that has failed," he said. "It's a really poor tool for identifying poor households. It's a great tool for helping the government run away from responsibility. A very great tool for that."